I’ve been watching the AI space like a hawk for months, and lately, something has felt… off.

We have large language models (LLMs) like GPT-4, Claude, Gemini, and Llama 4 that can write poetry, code websites, and summarize documents in seconds. They’re amazing. But when you ask them to do something that requires real, deep reasoning, the kind of thinking that involves planning, backtracking, and trying again, they fall apart.

They try to solve it with something called “Chain-of-Thought” (CoT), in which the model talks itself through the problem step by step. However, this approach is brittle: one wrong step in the “chain,” and the whole thing collapses. It also needs a lot of training data and produces long chains of text for every answer, which makes the models slow and expensive, and a poor fit for complex, deep reasoning tasks.

I was starting to think AI had hit a wall. A very, very big wall.

But then I came across a research paper that completely shifted my perspective. It’s called the “Hierarchical Reasoning Model” and, honestly, it might be one of the most important AI papers you’ve never heard of. This model could potentially revolutionize the field of AI by overcoming the limitations of current models and paving the way for more advanced and efficient AI systems.

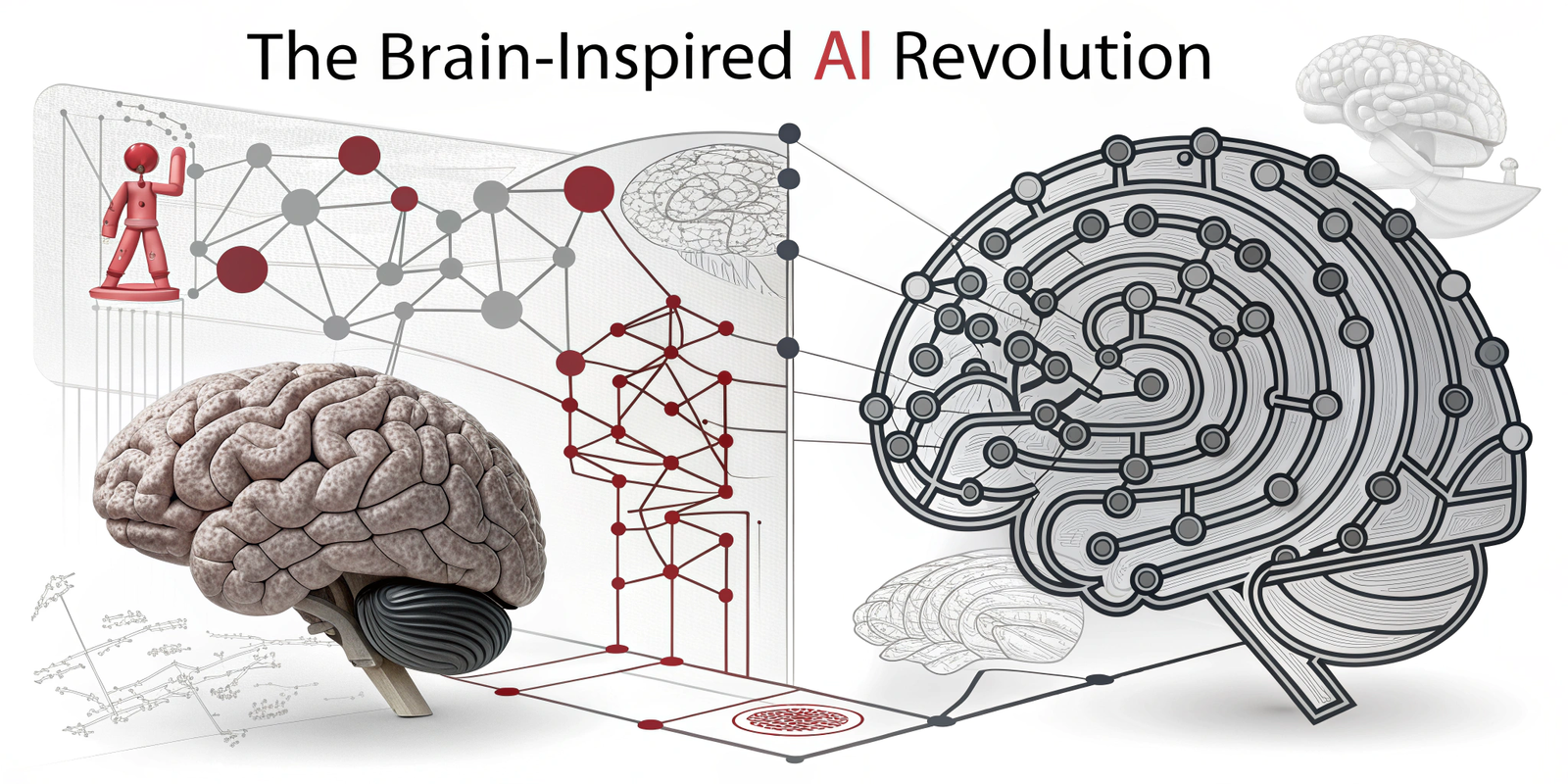

The Brain Doesn’t “Think” in a Straight Line

The researchers behind this paper asked a simple but essential question: If we’re trying to build human-like intelligence, why are we ignoring the very architecture of the human brain?

Our brains don’t work like a standard computer program, executing one instruction after another. Instead, our thinking is hierarchical and works on multiple timescales at once.

Think about how you solve a complex puzzle, like a Sudoku:

- The CEO (High-Level): You have a slow, deliberate part of your brain that looks at the whole board. It makes abstract plans, like “Okay, I’ll start by focusing on this 3×3 box, because it has the most numbers.” This is your strategic, big-picture thinking. It operates slowly.

- The Expert Team (Low-Level): Then, you have a fast, focused part of your brain that does the rapid calculations. “If this is a 4, then that can’t be a 4, which means this must be a 6…” This is your tactical, detail-oriented thinking. It operates very quickly.

The CEO gives a directive, the expert team works on it rapidly until they hit a wall or solve that part, and then they report back. The CEO then takes this new information and forms a new strategy.

This back-and-forth between slow, abstract planning and fast, detailed computation is what allows us to solve incredibly complex problems.

So, the researchers built an AI model that does exactly that. They called it the Hierarchical Reasoning Model, or HRM.
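To make the CEO-and-expert-team loop concrete, here is a minimal sketch of a two-timescale update, written in plain Python. Everything in it is an assumption for illustration: the function names (`low_step`, `high_step`, `hierarchical_forward`), the toy `tanh` updates, and the values of `n_cycles` and `t_steps` are made up, and the real model uses learned neural modules rather than hand-picked coefficients. The only thing it tries to show is the control flow: many fast steps under a fixed plan, then one slow plan update.

```python
import math

def low_step(z_low, z_high, x):
    """Fast 'expert team' update: refine the working state under the current plan."""
    return [math.tanh(0.5 * l + 0.3 * h + 0.2 * xi)
            for l, h, xi in zip(z_low, z_high, x)]

def high_step(z_high, z_low):
    """Slow 'CEO' update: revise the plan using the team's latest report."""
    return [math.tanh(0.6 * h + 0.4 * l) for h, l in zip(z_high, z_low)]

def hierarchical_forward(x, n_cycles=4, t_steps=8):
    """Run n_cycles slow updates, each preceded by t_steps fast updates."""
    z_high = [0.0] * len(x)
    z_low = [0.0] * len(x)
    low_updates = 0
    for _ in range(n_cycles):
        for _ in range(t_steps):
            z_low = low_step(z_low, z_high, x)  # the plan is held fixed here
            low_updates += 1
        z_high = high_step(z_high, z_low)       # report back, form a new plan
    return z_high, low_updates

state, steps = hierarchical_forward([0.5, -1.0, 0.25])
print(steps)  # 32 low-level updates = 4 cycles * 8 steps
```

The point of the nesting is that the number of sequential computation steps grows as cycles times steps per cycle, without adding more layers.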

I don’t know about you, but I wasn’t expecting this result:

Just look at this.

On the right side of that image are the results of some brutally complex tasks. We’re talking about Sudoku-Extreme and Maze-Hard (finding the one perfect path in a giant 30×30 maze). These aren’t your grandma’s newspaper puzzles; they require serious, multi-step reasoning and backtracking.

The big, state-of-the-art models that use Chain-of-Thought? They scored 0.0%—a complete and total failure.

Lol, not even a single correct answer.

The HRM model, which is tiny at only 27 million parameters (for comparison, the larger Llama 3 model has 70 billion), absolutely outperformed them. It achieved nearly perfect scores, showcasing its remarkable efficiency.

And get this: it did it with only 1,000 training examples. No massive pre-training on the entire internet. It learned how to reason from scratch.

LET… THAT… SINK… IN…

So, How Does It Actually “Think”?

This is where it gets cool. Most AIs fail at deep reasoning because of a fundamental architectural flaw.

- Standard Transformers (like GPT): They have a fixed number of layers. That means they have a fixed “computational depth.” It’s like having a brain that can only perform, say, 48 steps of thinking before it has to answer. For complex problems, that’s not enough.

- Recurrent Neural Networks (RNNs): These models can theoretically “think” for longer, but they suffer from what I call “running out of steam.” After a few cycles of thinking, their internal state converges, and they stop making meaningful progress.
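A toy example can show what “running out of steam” looks like in numbers. The sketch below is not from the paper: `step` is a made-up one-dimensional update, chosen only because it is contractive, so each application changes the state less than the one before. It records how much the state moves at every step.

```python
import math

def step(z, x):
    """A toy recurrent update. It is contractive, so repeated use settles down."""
    return math.tanh(0.5 * z + x)

def residuals(x, n_steps=10):
    """Return |z_next - z| for each step, i.e. how much the state still changes."""
    z = 0.0
    out = []
    for _ in range(n_steps):
        z_next = step(z, x)
        out.append(abs(z_next - z))
        z = z_next
    return out

res = residuals(0.3)
print([round(r, 4) for r in res])  # each value is smaller than the one before
```

Once those numbers are close to zero, running the loop for more steps adds almost no new computation, which is the problem described above.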

HRM solves this with something the paper calls “hierarchical convergence.”

Let me show you another image from the paper that explains this better than I ever could.

Okay, don’t get scared by the “forward residuals” label. All it means is “how much is the model’s ‘thought process’ changing at each step?”

- The Middle Graph (Recurrent Neural Net): See how the line quickly drops to zero? That’s the model “running out of steam.” It converges and stops thinking. Game over.

- The Right Graph (Deep Neural Net): This shows “vanishing gradients.” The thinking happens at the beginning and end, but the middle layers aren’t doing much. It’s shallow.

- The Left Graph (HRM): Now, look at this beauty. The blue line (the High-level “CEO”) steadily converges, keeping the overall strategy in mind. But the orange line (the Low-level “expert team”) works intensely, converges on a sub-problem, and then spikes back up. That spike is the CEO giving it a new task! In other words, the model is taking a breath and starting a new phase of intense thinking, over and over again, until the problem is solved.

This is why HRM can think so “deeply.” It never runs out of steam.
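The spike pattern can be reproduced with a toy version of the same idea. This is a sketch under loud assumptions: the scalar updates `low_step` and `high_step`, the input `x = 0.3`, and the cycle sizes are all invented for illustration and have nothing to do with the paper's trained model. The low-level state settles while the plan is fixed, and each plan update gives it a new target to chase.

```python
import math

def low_step(z_low, z_high, x):
    """Fast update: converges toward a fixed point set by the current plan."""
    return math.tanh(0.5 * z_low + z_high + x)

def high_step(z_high, z_low):
    """Slow update: moves the plan based on where the fast state settled."""
    return math.tanh(0.5 * z_high + z_low)

def run(x=0.3, n_cycles=3, t_steps=6):
    z_high, z_low = 0.0, 0.0
    low_res, high_res = [], []
    for _ in range(n_cycles):
        for _ in range(t_steps):
            nxt = low_step(z_low, z_high, x)
            low_res.append(abs(nxt - z_low))   # low-level forward residual
            z_low = nxt
        nxt_high = high_step(z_high, z_low)
        high_res.append(abs(nxt_high - z_high))  # high-level forward residual
        z_high = nxt_high
    return low_res, high_res

low_res, high_res = run()
T = 6
print(low_res[T - 1] < low_res[T])          # True: residual jumps at cycle 2
print(low_res[2 * T - 1] < low_res[2 * T])  # True: and again at cycle 3
```

In this toy run the low-level residual shrinks inside each cycle and jumps back up right after every plan update, while the high-level residual gets smaller from one cycle to the next.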

You Can Watch It Solve a Puzzle

This isn’t just theory. The researchers visualized the model’s intermediate “thoughts” as it solved a maze.

Look at that progression.

- Timestep 0: It starts with a messy, uncertain idea of the path.

- Timesteps 1–3: It explores multiple routes at once, almost like a wave spreading out. It’s identifying possibilities and dead ends.

- Timesteps 4–6: The model begins cutting off the wrong paths and fine-tuning the right ones, making it cleaner and more direct until it locks onto the optimal solution.

It’s not just spitting out an answer. It’s running a genuine problem-solving process internally. It explores, makes mistakes, corrects them, and refines.
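For readers who want a feel for the “wave spreading out, then pruning” pattern, a classical breadth-first search behaves in a loosely similar way. To be clear, this is only an analogy: the code below is a textbook algorithm, not what HRM runs internally, and the tiny `maze` and the function name `shortest_path` are made up for the example.

```python
from collections import deque

def shortest_path(grid, start, goal):
    """Breadth-first search on a grid of '.' (open) and '#' (wall) cells."""
    rows, cols = len(grid), len(grid[0])
    parent = {start: None}
    frontier = deque([start])
    while frontier:
        r, c = frontier.popleft()
        if (r, c) == goal:
            break
        # Expand the wavefront to every open, unvisited neighbour.
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] != "#" and (nr, nc) not in parent):
                parent[(nr, nc)] = (r, c)
                frontier.append((nr, nc))
    if goal not in parent:
        return None
    # Walk back from the goal, discarding every explored dead end.
    path, node = [], goal
    while node is not None:
        path.append(node)
        node = parent[node]
    return path[::-1]

maze = [
    "..#.",
    ".##.",
    "....",
]
path = shortest_path(maze, (0, 0), (0, 3))
print(len(path) - 1)  # 7 moves
```

The search visits the dead-end cell at the top before it is dropped from the final route, which is the explore-then-prune shape described in the timesteps above.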

Wow!

Why This Is a Bigger Deal Than You Think

So, a small model can solve Sudoku. Who cares, right?

Wrong. This is a massive deal for three reasons:

- A Path Away from Brute Force: We’ve been told the only way to AGI is by building bigger models and feeding them more data. HRM suggests that’s not the whole story. On these puzzles, a more innovative, more brain-like architecture can outperform a brute-force giant that’s more than 1,000x its size. This is a massive shift in the AI race.

- Actual Reasoning vs. Pattern Matching: Most LLMs are just incredibly sophisticated pattern-matchers. They’ve seen so much text, they can predict the next word with stunning accuracy. But HRM isn’t just predicting. It’s learning an algorithm. It’s developing a reusable, logical strategy. That’s a step closer to real intelligence.

- Efficiency and Accessibility: Models with 27M parameters can run on a laptop, possibly even a phone. They don’t require massive data centers. This could democratize access to powerful AI reasoning tools that aren’t controlled by a handful of tech giants.
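The size claims are easy to sanity-check with a little arithmetic. The parameter counts below come from this article. The 2 bytes per parameter is an assumption (16-bit weights), and the calculation only covers storing the weights, not the memory needed while the model runs.

```python
hrm_params = 27_000_000         # HRM size quoted in this article
llama_params = 70_000_000_000   # comparison model size quoted in this article

def weight_megabytes(n_params, bytes_per_param=2):
    """Storage for the weights alone, assuming 16-bit (2-byte) parameters."""
    return n_params * bytes_per_param / 1e6

ratio = llama_params / hrm_params
print(round(ratio))                    # 2593
print(weight_megabytes(hrm_params))    # 54.0
print(weight_megabytes(llama_params))  # 140000.0
```

Under that assumption the small model's weights take about 54 MB, against roughly 140 GB for the 70-billion-parameter one, which is why a laptop is plausible for one and not the other.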

We’re so focused on the LLM hype cycle that we might be missing the real revolution happening in the background. It’s not about making AI that can talk better; it’s about making AI that can think better.

Maybe the path to AGI isn’t about scaling up to infinity.

Maybe it’s about building smarter, more elegant systems… systems that look a little less like a database and a little more like us.
